Kubeflow Distributed Training

This workshop has been deprecated and archived. The new Amazon EKS Workshop is now available at www.eksworkshop.com.

Distributed Training using tf-operator and pytorch-operator

TFJob is a Kubernetes custom resource that you can use to run TensorFlow training jobs on Kubernetes. The Kubeflow implementation of TFJob is in tf-operator. Similarly, you can create a PyTorch job by defining a PyTorchJob config file; pytorch-operator then creates the job, monitors it, and keeps track of its status.
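To make the TFJob and PyTorchJob structure more concrete, here is a minimal Python sketch that builds both manifests as plain dictionaries. It assumes the `kubeflow.org/v1` API, a parameter-server layout for TFJob (`PS` and `Worker` replicas) and a `Master`/`Worker` layout for PyTorchJob. The image, command, and job names are hypothetical, and the workshop's own distributed-tensorflow-job.yaml and distributed-pytorch-job.yaml may use a different API version, image, and replica counts.

```python
import json


def replica_spec(replicas, image, container_name, command):
    """Build one replica spec; TFJob and PyTorchJob share this shape."""
    return {
        "replicas": replicas,
        "restartPolicy": "OnFailure",
        "template": {
            "spec": {
                "containers": [
                    {
                        # The operators expect this container name:
                        # "tensorflow" for TFJob, "pytorch" for PyTorchJob.
                        "name": container_name,
                        "image": image,
                        "command": command,
                    }
                ]
            }
        },
    }


def tfjob_manifest(name, image, command, ps=1, workers=2):
    """Parameter-server style TFJob: PS replicas hold variables, workers compute."""
    return {
        "apiVersion": "kubeflow.org/v1",  # assumption: v1 API
        "kind": "TFJob",
        "metadata": {"name": name},
        "spec": {
            "tfReplicaSpecs": {
                "PS": replica_spec(ps, image, "tensorflow", command),
                "Worker": replica_spec(workers, image, "tensorflow", command),
            }
        },
    }


def pytorchjob_manifest(name, image, command, workers=2):
    """PyTorchJob: exactly one Master plus N Workers."""
    return {
        "apiVersion": "kubeflow.org/v1",  # assumption: v1 API
        "kind": "PyTorchJob",
        "metadata": {"name": name},
        "spec": {
            "pytorchReplicaSpecs": {
                "Master": replica_spec(1, image, "pytorch", command),
                "Worker": replica_spec(workers, image, "pytorch", command),
            }
        },
    }


if __name__ == "__main__":
    # Hypothetical job name, image, and script path, for illustration only.
    job = tfjob_manifest("dist-mnist-tf", "example.com/tf-mnist:latest",
                         ["python", "/opt/mnist.py"])
    print(json.dumps(job, indent=2))
```

Because kubectl accepts JSON as well as YAML, the printed output could be saved to a file and submitted with `kubectl apply -f <file>`.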

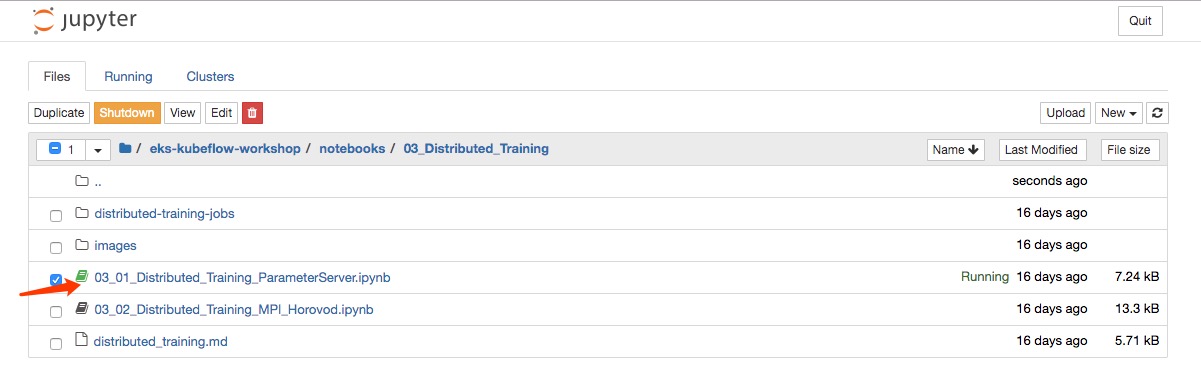

Go to your eks-kubeflow-workshop notebook server and browse to the distributed training notebook (eks-workshop-notebook/notebooks/03_Distributed_Training/03_01_Distributed_Training_ParameterServer.ipynb). If you haven't set up the notebook server yet, review the Fairing chapter and complete the instructions for cloning the repo.
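The notebook uses parameter-server training. As background, tf-operator tells each pod its role by injecting a `TF_CONFIG` environment variable that holds the cluster layout (`cluster`) and the pod's own task (`task`). The sketch below parses such a value. The example JSON, including the job name, namespace, and host names, is hypothetical; the exact addresses tf-operator generates may differ from this illustration.

```python
import json
import os


def parse_tf_config(raw=None):
    """Return (task_type, task_index, cluster) from a TF_CONFIG JSON string.

    Falls back to the TF_CONFIG environment variable when raw is None.
    """
    if raw is None:
        raw = os.environ.get("TF_CONFIG", "{}")
    config = json.loads(raw)
    cluster = config.get("cluster", {})
    task = config.get("task", {})
    return task.get("type"), task.get("index"), cluster


# Hypothetical value shaped like what tf-operator injects into a worker pod.
EXAMPLE = json.dumps({
    "cluster": {
        "ps": ["dist-mnist-tf-ps-0.kubeflow.svc:2222"],
        "worker": ["dist-mnist-tf-worker-0.kubeflow.svc:2222",
                   "dist-mnist-tf-worker-1.kubeflow.svc:2222"],
    },
    "task": {"type": "worker", "index": 1},
    "environment": "cloud",
})

if __name__ == "__main__":
    task_type, task_index, cluster = parse_tf_config(EXAMPLE)
    print(f"I am {task_type} #{task_index}; cluster has "
          f"{len(cluster.get('ps', []))} PS and "
          f"{len(cluster.get('worker', []))} workers")
    # prints: I am worker #1; cluster has 1 PS and 2 workers
```

Inside a real training pod, calling `parse_tf_config()` with no argument would read the injected variable instead of the example string.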

In the notebook, you can review the basic concepts of distributed training. In addition, we have prepared distributed-tensorflow-job.yaml and distributed-pytorch-job.yaml so that you can run distributed training jobs on EKS. Follow the notebook's guidance to inspect the job specs, create the jobs, and monitor them.
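Besides watching the notebook output, you can check a job's progress from its status conditions. This sketch assumes the `kubeflow.org/v1` status shape, where `status.conditions` holds entries such as `Created`, `Running`, `Succeeded`, or `Failed`, each with a `status` of `"True"` or `"False"`. It fetches the object with `kubectl get <kind> <name> -n <namespace> -o json`, so it needs kubectl configured against your EKS cluster.

```python
import json
import subprocess


def latest_condition(job):
    """Return the type of the most recent active condition, or None.

    Assumes status.conditions entries look like
    {"type": ..., "status": "True"/"False", "lastTransitionTime": "...Z"}.
    """
    conditions = job.get("status", {}).get("conditions", [])
    active = [c for c in conditions if c.get("status") == "True"]
    if not active:
        return None
    # UTC RFC 3339 timestamps sort correctly as strings; sorted() is stable,
    # so for equal timestamps the later entry in the list wins.
    ordered = sorted(active, key=lambda c: c.get("lastTransitionTime", ""))
    return ordered[-1]["type"]


def get_job(kind, name, namespace):
    """Fetch a TFJob/PyTorchJob object as a dict via kubectl."""
    out = subprocess.run(
        ["kubectl", "get", kind, name, "-n", namespace, "-o", "json"],
        check=True, capture_output=True, text=True,
    ).stdout
    return json.loads(out)
```

For example, `latest_condition(get_job("tfjob", "dist-mnist-tf", "kubeflow"))` would report `"Running"` while training is in progress, assuming a job with that hypothetical name exists in that hypothetical namespace.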

From this point on, it's important to read the notebook instructions carefully. The information on this workshop page is intentionally brief; use it to confirm that you get the expected result. You can complete the entire exercise without leaving the notebook.